RESTful APIs have become a standard way to centralize data management and the complex business logic that monolithic MVC applications struggle to handle. Separating the frontend (client code) from the backend (database, heavy logic) makes it much easier to serve different components at the same time: one backend for several client apps such as iOS, Android, web and others. This also improves interoperability within the development team. Many language and framework communities have implemented their own approach to RESTful APIs; today it is Node.js's turn.

Node.js has been one of the most popular technologies for many years, and it ranks at the top of the web technologies category in the Stack Overflow Developer Survey 2024. Many companies have adopted it as their primary backend platform.

But what makes it special?

The Node.js event loop, running on top of the V8 engine, makes it much easier to develop real-time applications. Other languages are even being “Node.js-ified” by adding similar features to their runtimes to handle asynchronous processing. Here are a few use cases where Node.js has worked very well:

- Streaming

- Chat applications

- Real-time reports

- Dashboards

At the time of writing, Node.js is commonly used together with TypeScript to solve some of the pain points that have come up in Node.js, although these are being addressed with each new release. In today's development world we constantly use third-party libraries, frameworks and other tools that make REST API development easier; the open source offering is immense and sometimes overwhelming.

Why not use the Node.js HTTP server directly?

We can definitely work with the HTTP module directly to handle a request and send a response. For a few endpoints that is fine; problems appear as the application grows, even with an application like AdaptNotes.
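To make the problem concrete, here is a minimal sketch of one endpoint written with only the built-in `node:http` module. The `/notes` route, the sample data and the `routeRequest` helper are assumptions made for illustration; they are not taken from the series repository. Routing, status codes and serialization are all manual, and every new endpoint adds another branch.

```typescript
import { createServer } from "node:http";

// Hypothetical note shape, used only for this illustration.
type Note = { id: number; content: string };

const notes: Note[] = [
  { id: 1, content: "Hello 1" },
  { id: 2, content: "Hello 2" },
];

// Manual routing: every endpoint is another branch we maintain by hand.
function routeRequest(method: string, url: string): { status: number; body: string } {
  if (method === "GET" && url === "/notes") {
    return { status: 200, body: JSON.stringify(notes) };
  }
  return { status: 404, body: JSON.stringify({ message: "Not found" }) };
}

// The server only delegates to routeRequest and writes the result.
const server = createServer((req, res) => {
  const { status, body } = routeRequest(req.method ?? "GET", req.url ?? "/");
  res.writeHead(status, { "Content-Type": "application/json" });
  res.end(body);
});

// Uncomment to try it locally:
// server.listen(3000, () => console.info("Listening on http://localhost:3000"));
```

With two or three routes this stays readable, but validation, documentation and error handling all have to be added by hand, which is the work a framework takes over.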

The criteria I recommend for choosing a framework can be divided into two categories:

- High-level details:

  - Active community

  - A clear path for addressing security issues

- Tools that make your life easier:

  - Comprehensive documentation

  - Easy configuration

  - Good integration with the OpenAPI spec (aka Swagger): a way to expose interactive documentation of REST APIs

I chose the Nest.js framework because it is widely used and matches the expectations of this series. In subsequent articles I will demonstrate that switching between frameworks does not have to be dramatic.

Installing necessary tools

The Node.js ecosystem is large and includes several package managers: npm, yarn and pnpm. We will use npm and the LTS version of Node.js; see the Node.js installation page.

Once Node.js is installed on your computer, the next step is to open a terminal window. If your IDE has an integrated one, even better!

All code will be uploaded to a GitHub repository.

Using TypeScript as a first-class citizen

In this series we treat TypeScript as a first-class citizen to minimize friction with external libraries and to make TypeScript and Node.js the protagonists of the backend.

Our first command will be to create a new folder.

    mkdir -p adaptable-backend-node

Go to the directory:

    cd adaptable-backend-node

Start a project:

    npm init

The terminal will prompt you for a few inputs. Fill them in with the values you want: type the text and press Enter.

    This utility will walk you through creating a package.json file.
    It only covers the most common items, and tries to guess sensible defaults.

    See `npm help init` for definitive documentation on these fields
    and exactly what they do.

    Use `npm install <pkg>` afterwards to install a package and
    save it as a dependency in the package.json file.

    Press ^C at any time to quit.
    package name: (adaptable-backend-node) adaptable-backend-node
    version: (1.0.0) 0.0.1
    description: An adaptable backend
    entry point: (index.js)
    test command:
    git repository:
    keywords:
    author:
    license: (ISC)
    About to write to /adaptable-backend-node/package.json:

    {
      "name": "adaptable-backend-node",
      "version": "0.0.1",
      "description": "An adaptable backend",
      "main": "index.js",
      "scripts": {
        "test": "echo \"Error: no test specified\" && exit 1"
      },
      "author": "",
      "license": "ISC"
    }

    Is this OK? (yes) yes

Configure node.js version

This step is crucial for keeping a consistent version and avoiding bugs and failures caused by differences between Node.js versions. Add engines to package.json:

    {
      "name": "adaptable-backend-node",
      "version": "0.0.1",
      "description": "An adaptable backend",
      "main": "index.js",
      "engines": {
        "node": ">=23.x"
      },
      "scripts": {
        "test": "echo \"Error: no test specified\" && exit 1"
      },
      "author": "",
      "license": "ISC"
    }

Assuming you installed Node.js 23, use the 23.x version number.
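By default npm treats the engines field as advisory and only prints a warning, unless the `engine-strict` setting is enabled in the npm configuration. If you want the application itself to refuse to start on an older runtime, a small startup guard is one option. The sketch below is an assumption of how that could look; `parseMajor` and `assertNodeVersion` are hypothetical helper names, and only the minimum major version (23) comes from this article.

```typescript
// Hypothetical helpers; only the minimum major version (23) comes from the article.

// Extracts the major version from strings such as "23.6.0" or "v23.6.0".
function parseMajor(version: string): number {
  const major = Number(version.replace(/^v/, "").split(".")[0]);
  if (!Number.isInteger(major)) {
    throw new Error(`Cannot parse Node.js version: ${version}`);
  }
  return major;
}

// Throws when the running version is older than the required major version.
function assertNodeVersion(current: string, minMajor: number): void {
  if (parseMajor(current) < minMajor) {
    throw new Error(`Node.js >= ${minMajor} is required, but ${current} is running`);
  }
}

// At startup you would call:
// assertNodeVersion(process.versions.node, 23);
```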

Configuring typescript file tsconfig.json

There are many options you can tune to match your requirements. Here is the TypeScript configuration that will be used in this project:

    {
      "compilerOptions": {
        "baseUrl": ".",
        "outDir": "./dist",
        "target": "es2023",
        "lib": ["es2023", "dom"],
        "typeRoots": ["./node_modules/@types"],
        "types": ["node"],
        "module": "commonjs",
        "moduleResolution": "node",
        "experimentalDecorators": true,
        "emitDecoratorMetadata": true,
        "sourceMap": true,
        "declaration": false,
        "allowSyntheticDefaultImports": true,
        "paths": {
          "src/*": ["src/*"]
        }
      },
      "include": ["src/**/*.ts"],
      "exclude": ["node_modules", "./public", "dist", "test"]
    }

Installing libraries

The npm ecosystem has two types of dependencies:

- Development dependencies: Used for local development environment

- Production dependencies: Used to generate the artifact executable.

Installing development dependencies:

    npm install --save-dev @nestjs/cli @types/node tsconfig-paths typescript ts-node

| Package | Description |
|---|---|
| @nestjs/cli | Command-line interface tool for Nest.js that provides commands to generate, build, and manage applications |
| @types/node | Not every package ships its own TypeScript types; the @types/ packages provide them separately, in this case for the Node.js APIs |
| tsconfig-paths | Imports in Node.js can get messy; this utility resolves the path aliases defined in tsconfig.json so imports stay meaningful |
| typescript | The TypeScript compiler, which transpiles TypeScript syntax to plain JavaScript |
| ts-node | Runs TypeScript files directly without a separate transpilation step, ideal for local tasks |

A few production dependencies:

    npm install @nestjs/core @nestjs/platform-express @nestjs/swagger reflect-metadata

| Package | Description |
|---|---|
| @nestjs/core | The core of the Nest.js framework |
| @nestjs/platform-express | Nest.js runs on top of an HTTP platform such as Express (the default) or Fastify; this package is the Express adapter and is well supported |
| @nestjs/swagger | Contains all the necessary tools to generate Swagger documentation automatically |
| reflect-metadata | Metaprogramming (reflection) support in TypeScript is still evolving; Nest.js requires this dependency, and we will use it in later articles |

Now we have a few tools to start creating the REST API. Despite the boilerplate code that the Nest.js documentation provides, and generators such as Yeoman or Cursor, the idea is to follow an incremental process and create every piece of the backend ourselves. Let’s continue with the nest-cli.json config:

Create a new file named nest-cli.json with the following content:

    {
      "language": "ts", // typescript, "ts" for brevity
      "collection": "@nestjs/schematics", // references the schematics package used by the CLI installed previously
      "sourceRoot": "src", // our code will reside in the src folder
      "monorepo": true, // the project uses the Nest.js monorepo structure
      "compilerOptions": {
        "plugins": [
          {
            "name": "@nestjs/swagger/plugin",
            "options": {
              "dtoFileNameSuffix": [
                ".dto.ts" // this step is important to show models in Swagger
              ],
              "classValidatorShim": false
            }
          }
        ]
      },
      "projects": {
        "restAPI": { // we call it restAPI because in the future we can have [Technology-name]API
          "type": "application",
          "entryFile": "main",
          "sourceRoot": "src/apps/restAPI"
        }
      }
    }

The comments are there only to explain each field; standard JSON does not allow comments, so remove them if the CLI fails to parse the file.

Creating the folder structure

Having the CLI runner specification that nest.js requires, let’s create this folder structure:

    mkdir -p src/apps/restAPI/frameworks/nestjs/restControllers
    mkdir -p src/core

Nest.js and other frameworks build on the MVC pattern to separate concerns, and they call every endpoint router a Controller. In this series we will refer to them as RestControllers, because it is good practice and reminds us that REST can later be swapped for another technology.

Let’s create our first DTO (note.dto.ts) inside restControllers. The .dto.ts suffix matters because the Swagger plugin configured in nest-cli.json looks for files with that suffix to render them in the Swagger docs.

    export class NoteDto {
      id: number;
      content: string;
    }

In the folder src/apps/restAPI/frameworks/nestjs/restControllers, create a new file noteRestController.ts with this content:

    import { Controller, Get } from "@nestjs/common";
    import { ApiOkResponse } from "@nestjs/swagger";

    import { NoteDto } from "./note.dto";

    @Controller("notes")
    export class NoteRestController {
      @Get()
      @ApiOkResponse({
        type: [NoteDto],
        description: "Get all notes",
      })
      public getNotes(): NoteDto[] {
        return [
          { id: 1, content: "Hello 1" },
          { id: 2, content: "Hello 2" },
        ];
      }
    }

We import the decorators from @nestjs/common for these purposes:

- Controller: Identifies the NoteRestController class as the one that contains the method implementations and receives the incoming requests; the string parameter “notes” defines the route prefix.

- Get: Tells the Nest.js framework that the method handles the HTTP GET verb on that route.

From @nestjs/swagger we import ApiOkResponse to tell the Swagger doc generation the type of the response, defined previously in the NoteDto class. It normally receives the type and a description that expresses the intention of the endpoint we are building.

Finally, there is a getNotes() method implementation using sample data. Having our first controller we can proceed to create the server instance provided by nest.js to start creating our first endpoint.

Next we create the Route that groups all RestControllers. How many you need depends on your approach and on how large the app is: you can create as many Routes as needed (the Nest.js team calls them Modules). Splitting your components is good practice to guarantee separation of concerns (explained in the introduction article). For now I created a single Route, and in the next articles we’ll see how to improve modularization.

    import { Module } from "@nestjs/common";

    import { NoteRestController } from "./restControllers/noteRestController";

    @Module({
      controllers: [NoteRestController],
    })
    export class Route {}

Here we only import Module from @nestjs/common to group the list of RestControllers. Next, we’ll create the server factory and the Swagger configuration in the nestjs folder so that the docs render correctly according to the Nest.js guidelines:

    import { NestFactory } from "@nestjs/core";
    import { INestApplication } from "@nestjs/common";
    import { SwaggerModule, DocumentBuilder } from "@nestjs/swagger";

    import { Route } from "./route";

    export class NestServerFactory {
      static async create(): Promise<INestApplication> {
        const app = await NestFactory.create(Route);

        this.setupSwagger(app);

        return app;
      }

      private static setupSwagger(app: INestApplication): void {
        const config = new DocumentBuilder()
          .setTitle("AdaptNotes API")
          .setDescription("The AdaptNotes API documentation")
          .setVersion("1.0")
          .build();

        const document = SwaggerModule.createDocument(app, config);
        SwaggerModule.setup("docs", app, document);
      }
    }

- NestFactory: Creates the application server from the Routes and any other middleware configuration needed for server initialization.

- INestApplication: The interface declaration of nest.js to keep everything typed as much as possible.

- SwaggerModule: The swagger helper module that will generate the swagger docs, given the parameters “docs”, the application server (app), and the setup and configuration made by the DocumentBuilder class.

- DocumentBuilder: This is a programmatic way to set up all configurations of swagger (openapi) documentation.

Having the minimal setup of the application, the next step is to make it runnable in the context of the restAPI application. Creating the Server instance will take care of this:

    import { NestServerFactory } from "./frameworks/nestjs/nestServerFactory";

    export class Server {
      private static servers = {
        nestjs: NestServerFactory,
      };

      private server;

      async run({ port }: { port: number }) {
        const framework = "nestjs";
        this.server = await Server.servers[framework].create();
        await this.server.listen(port);
        console.info(`Server running on ${await this.getUrl()}`);
      }

      async getUrl() {
        return this.server.getUrl();
      }

      async close() {
        await this.server.close();
        console.warn("Server closed");
      }
    }

The Server class encapsulates the backend framework operations behind run(), getUrl() and close(). I implemented a strategy pattern to choose between frameworks; the servers attribute serves that purpose.

When we want to incorporate another framework or switch to another one, we can add it to the servers object. The run() function receives a configuration object as a parameter, which lets us change the port. The framework variable could be passed as configuration too, and keeping each framework behind its own server factory gives us that flexibility. With process.on("SIGINT") we capture external requests to stop the server, which is useful to close it cleanly and to send a notification if something unexpected happens.
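The registry idea can be sketched in isolation. The example below is an assumption made for illustration and is not the code from the repository: `HttpServer`, `ServerFactory`, `InMemoryServerFactory` and `resolveFactory` are hypothetical names, and the in-memory adapter stands in for a second framework so the sketch runs without Nest.js installed.

```typescript
// Minimal contract every framework adapter must satisfy (hypothetical names).
interface HttpServer {
  listen(port: number): Promise<void>;
  getUrl(): Promise<string>;
  close(): Promise<void>;
}

interface ServerFactory {
  create(): Promise<HttpServer>;
}

// A fake in-memory adapter standing in for a second framework.
class InMemoryServerFactory {
  static async create(): Promise<HttpServer> {
    let port = 0;
    return {
      listen: async (p: number) => {
        port = p;
      },
      getUrl: async () => `http://localhost:${port}`,
      close: async () => {
        port = 0;
      },
    };
  }
}

// The registry: adding a framework means adding one entry here.
const factories = new Map<string, ServerFactory>([
  ["inMemory", InMemoryServerFactory],
  // ["nestjs", NestServerFactory], // the real adapter from the article
]);

// Looks up a factory by name and fails loudly for unknown frameworks.
function resolveFactory(framework: string): ServerFactory {
  const factory = factories.get(framework);
  if (!factory) {
    const available = Array.from(factories.keys()).join(", ");
    throw new Error(`Unknown framework "${framework}". Available: ${available}`);
  }
  return factory;
}
```

A run() method could then accept the framework name next to the port and call `resolveFactory(framework).create()`, so the rest of the Server class never needs to know which framework is behind it.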

We need an entry point, defined as main in the nest-cli.json file, which will run the server.

    import { Server } from "./server";

    const server = new Server();
    server.run({ port: 3000 });

    process.on("SIGINT", async () => {
      console.warn("Closing server");
      await server.close();
    });

One more step is needed to have the initial REST API running: add a new command to the scripts section of package.json to run the restAPI in development mode, which means a live-reload server picks up every change.

    "scripts": {
      "start:restAPI:dev": "npx nest start restAPI --watch"
    }

Now, in a terminal window, run:

    npm run start:restAPI:dev

You should see an output like this:

    [1:48:39 PM] Starting compilation in watch mode...
    [1:48:42 PM] Found 0 errors. Watching for file changes.
    [Nest] 76467 - 07/14/2025, 1:48:42 PM LOG [NestFactory] Starting Nest application...
    [Nest] 76467 - 07/14/2025, 1:48:42 PM LOG [InstanceLoader] Route dependencies initialized +9ms
    [Nest] 76467 - 07/14/2025, 1:48:42 PM LOG [RoutesResolver] NoteRestController {/notes}: +16ms
    [Nest] 76467 - 07/14/2025, 1:48:42 PM LOG [RouterExplorer] Mapped {/notes, GET} route +3ms
    [Nest] 76467 - 07/14/2025, 1:48:42 PM LOG [NestApplication] Nest application successfully started +1ms
    Server running on http://[::1]:3000

Congrats, you have the REST API up and running. The Nest logs tell us the following, line by line:

- LOG [NestFactory] Starting Nest application: The framework is starting without errors.

- LOG [InstanceLoader] Route dependencies initialized: Our Route module was loaded correctly.

- LOG [RoutesResolver] NoteRestController /notes: The NoteRestController was loaded and the /notes route is available to receive calls.

- Server running on http://[::1]:3000: This is the custom message we print in Server.run() once the server is listening.

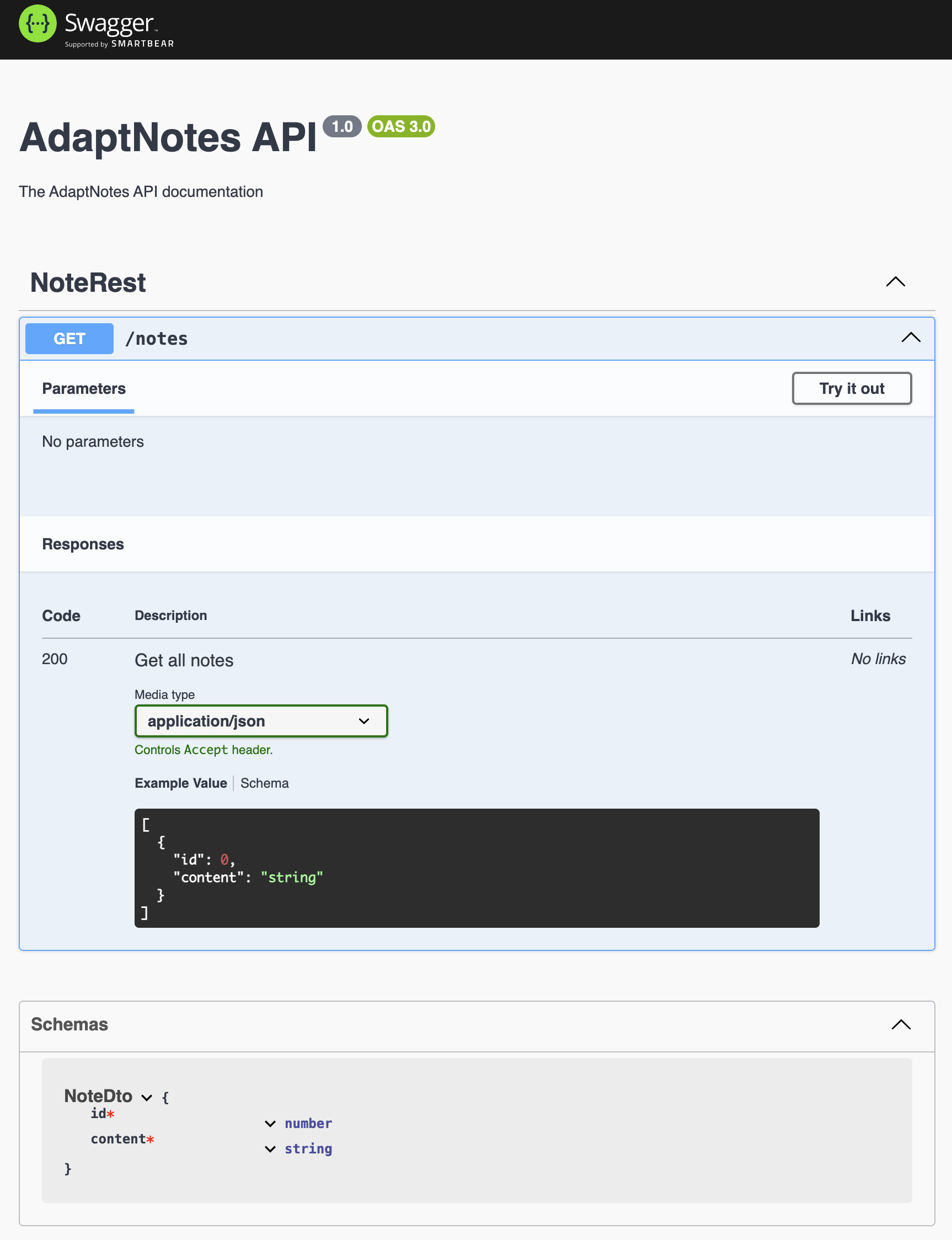

In nestServerFactory.ts we pass “docs” to SwaggerModule.setup(), which makes it the entry point for the Swagger document. Open a new browser window and go to http://[::1]:3000/docs. You will see a page like this:

We can confirm that the notes controller and its routes render correctly according to the previous setup for RestControllers.

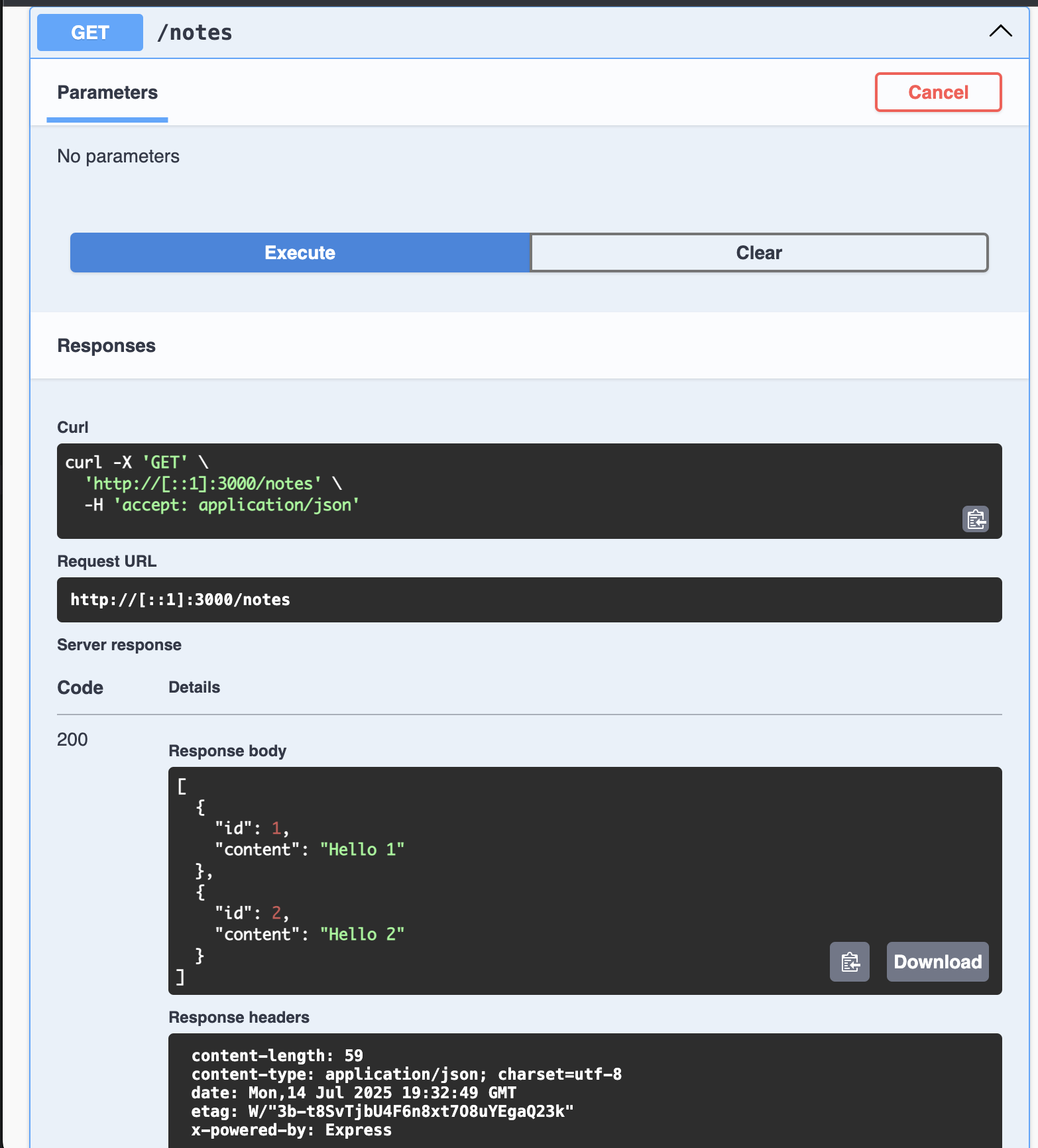

Try the get notes method by pressing the “Try it out” button and then “Execute” to see the response:

It responds with the sample data we configured.
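The same check can be done from code instead of the Swagger page. The sketch below is an assumption for illustration: `isNoteList` and `fetchNotes` are hypothetical helpers, and the base URL is whatever address your server printed at startup. It uses the global `fetch` that ships with recent Node.js versions.

```typescript
// Mirrors the NoteDto shape from the article.
type Note = { id: number; content: string };

// Runtime type guard: checks that unknown JSON really is a list of notes.
function isNoteList(data: unknown): data is Note[] {
  return (
    Array.isArray(data) &&
    data.every(
      (item) =>
        typeof item === "object" &&
        item !== null &&
        typeof (item as Note).id === "number" &&
        typeof (item as Note).content === "string",
    )
  );
}

// Assumes the server from this article is running at the given base URL.
async function fetchNotes(baseUrl: string): Promise<Note[]> {
  const response = await fetch(`${baseUrl}/notes`);
  if (!response.ok) throw new Error(`Unexpected status ${response.status}`);
  const data: unknown = await response.json();
  if (!isNoteList(data)) throw new Error("Response is not a list of notes");
  return data;
}

// Example call, with the server running:
// fetchNotes("http://localhost:3000").then((list) => console.info(list));
```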

At this point we have a very basic but flexible config that allows us to add features quickly using the framework.

Configuration: Environment variables

So far we are hard-coding many values, such as the port, the Swagger description and other strings that may change in the near future and would be tedious to update on request. We can divide them into constants that rarely change, such as the Swagger description, and environment variables that are convenient to keep outside the code. The port, for example, is a number that can be set by the server, the deployment, or the orchestrator service that brings the REST API to the production environment. The Node.js ecosystem offers many alternatives for loading and validating environment variables. In our case I intend to implement our own Configuration, which we can adapt to incoming needs and which minimizes the changes that external libraries would force on us. Last but not least, the Node.js API for environment variables is good enough that no external library is required.

Responsibilities of configuration

- Load environment variables from a .env file in the root folder, which makes it easier to load config and test locally during development.

- Define which variables are required and which are not. Believe me when I say I once spent 2-3 hours debugging a cold start error that was caused by a single missing required env-var.

- A missing required env-var should stop the server from starting.

Creating the configuration class

The Configuration class will load environment variables from a .env configuration file, a common convention for declaring and loading env-vars in development mode. This can be extended to load a .env.test file when unit tests run. In any case, it’s better to set it up in the early stages.

The Configuration class will be a singleton that holds, at execution time, all loaded environment variables in one central place. This avoids referring to process.env.NAME_VAR every time and enforces types in TypeScript, which is desirable. Now we’ll create the .env file with the necessary key=value pairs.

    # This is a comment and will be ignored; you can use it to describe your env
    PORT=3001
    NODE_ENV=development

Now we’ll create the configuration.ts file that will handle these responsibilities, starting with some interfaces and types to keep everything typed.

    import { readFileSync, existsSync } from "fs";
    import * as path from "path";

    interface EnvConfig {
      NODE_ENV: "development" | "production" | "test";
      PORT: number;
    }

    type EnvVarConfig = {
      required: boolean;
      type: "string" | "number" | "boolean";
      default?: string | number | boolean;
    };

The readFileSync and existsSync functions are imported from the Node.js fs (file system) module to read the file and check whether it exists. Remember, the REST API cannot start if these minimal requirements are not met.

The interface EnvConfig gives a name and a type to each variable we want to control. The type EnvVarConfig lets us define a schema for each environment variable.

    export class Configuration {
      private static instance: Configuration;
      private readonly config: Map<keyof EnvConfig, string | number | boolean> = new Map();

      private readonly envSchema: Record<keyof EnvConfig, EnvVarConfig> = {
        NODE_ENV: { required: true, type: "string" },
        PORT: { required: true, type: "number" },
      };

      private constructor() {
        this.loadEnvFile();
        this.validateConfig();
      }

      private loadEnvFile(): void {
        const envFile = process.env.NODE_ENV === "test" ? ".env.test" : ".env";
        const envPath = path.resolve(process.cwd(), envFile);

        if (!existsSync(envPath)) {
          console.warn(`Warning: ${envFile} file not found at ${envPath}`);
          return;
        }

        try {
          const content = readFileSync(envPath, "utf8");
          this.parseEnvContent(content);
        } catch (error) {
          console.error(`Error reading ${envFile}:`, error);
        }
      }
    } // Shortened for brevity

We declare the typical singleton instance of the class and create a config map (for fast lookups) that can hold any of the keys defined in EnvConfig. The envSchema object defines the schema: the type of each variable and whether it is required.

In the constructor we call loadEnvFile() and validateConfig(). Here is what loadEnvFile() does:

- Choose the file based on the NODE_ENV set when we run the start command.

- Resolve the complete path of the file.

- Check whether the file exists; if it does not, log a warning and return.

- Read the content of the .env file as a string and pass it to parseEnvContent().

    private parseEnvContent(content: string): void {
      const lines = content.split("\n");
      const envVars = new Map<string, string>();

      for (const line of lines) {
        const trimmed = line.trim();

        if (!trimmed || trimmed.startsWith("#")) continue;

        const equalIndex = trimmed.indexOf("=");
        if (equalIndex === -1) continue;

        const key = trimmed.slice(0, equalIndex).trim();
        let value = trimmed.slice(equalIndex + 1).trim();

        value = value.replace(/^(['"])(.*)\1$/, "$2");

        envVars.set(key, value);
      }

      for (const [key, value] of envVars) {
        if (!(key in process.env)) {
          process.env[key] = value;
        }
      }
    }

- Receive the string content.

- Split the content of the file into lines.

- Iterate over the lines, skipping blank lines and comments that start with ’#’.

- Find the first equals sign to separate the key from the value.

- Strip matching surrounding quotes (single or double) to clean the values.

- Finally, set only the variables that do not already exist, so values already present in process.env take precedence.
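The steps above can be tried on a sample file with a standalone sketch. This version is an assumption made for illustration: `parseEnv` and `applyEnv` are hypothetical free functions that return and copy the pairs instead of touching process.env directly, and `APP_NAME` and `DATABASE_URL` are made-up variables.

```typescript
// Standalone version of the parsing steps; returns the pairs instead of writing to process.env.
function parseEnv(content: string): Map<string, string> {
  const vars = new Map<string, string>();
  for (const line of content.split("\n")) {
    const trimmed = line.trim();
    if (!trimmed || trimmed.startsWith("#")) continue; // blank line or comment
    const equalIndex = trimmed.indexOf("=");
    if (equalIndex === -1) continue; // not a key=value line
    const key = trimmed.slice(0, equalIndex).trim();
    const value = trimmed
      .slice(equalIndex + 1)
      .trim()
      .replace(/^(['"])(.*)\1$/, "$2"); // strip matching surrounding quotes
    vars.set(key, value);
  }
  return vars;
}

// Copies parsed values into a target env object without overriding existing keys.
function applyEnv(vars: Map<string, string>, target: Record<string, string | undefined>): void {
  vars.forEach((value, key) => {
    if (!(key in target)) target[key] = value;
  });
}

// Made-up sample content, including a comment and an invalid line.
const sample = [
  "# local settings",
  "PORT=3001",
  'APP_NAME="Adapt Notes"',
  "DATABASE_URL=postgres://localhost/db?ssl=true",
  "not a valid line",
].join("\n");

const parsed = parseEnv(sample);
const env: Record<string, string | undefined> = { PORT: "4000" }; // pretend PORT was exported in the shell
applyEnv(parsed, env);
console.log(env);
// PORT stays "4000"; APP_NAME and DATABASE_URL come from the file.
```

Only the first equals sign splits a line, which is why the query string in the made-up `DATABASE_URL` survives intact.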

After loading the env-vars from the file, the remaining step is to verify that the values now in process.env match the schema we defined.

    private validateConfig(): void {
      const missingRequired: string[] = [];

      for (const [key, schema] of Object.entries(this.envSchema)) {
        const envKey = key as keyof EnvConfig;
        const rawValue = process.env[envKey];

        if (!rawValue) {
          if (schema.required) {
            missingRequired.push(envKey);
          } else if (schema.default !== undefined) {
            // Optional variable: fall back to the default from the schema.
            this.config.set(envKey, schema.default);
          }
          continue; // nothing to parse for a missing variable
        }

        const parsedValue = this.parseValue(rawValue, schema.type);
        this.config.set(envKey, parsedValue);
      }

      if (missingRequired.length > 0) {
        throw new Error(`Missing required environment variables: ${missingRequired.join(", ")}`);
      }
    }

    private parseValue(value: string, type: EnvVarConfig["type"]): string | number | boolean {
      if (type === "number") {
        const parsed = Number(value);
        if (isNaN(parsed)) {
          throw new Error(`Invalid number value: ${value}`);
        }
        return parsed;
      }
      if (type === "boolean") {
        // Assumption: only the literal "true" counts as true.
        return value === "true";
      }
      return value;
    }

- Iterate over envSchema.

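- Read each key from process.env and check it against the schema.

- If a variable is missing, record it when it is required; otherwise apply its default value, if one is defined.

- parseValue() is a utility function that checks whether a value declared as a number can actually be parsed as a number.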
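The validation rules can also be exercised outside the class. The sketch below is an assumption made for illustration, not the article's implementation: `validateEnv` and `VarSchema` are hypothetical names, the env values are passed in as a plain object rather than read from process.env, and `LOG_LEVEL` is a made-up optional variable.

```typescript
type VarSchema = {
  required: boolean;
  type: "string" | "number";
  default?: string | number;
};

// Validates raw string values against a schema and returns the parsed values.
function validateEnv(
  env: Record<string, string | undefined>,
  schema: Record<string, VarSchema>,
): Record<string, string | number> {
  const result: Record<string, string | number> = {};
  const missing: string[] = [];

  for (const key of Object.keys(schema)) {
    const rule = schema[key];
    const raw = env[key];

    if (raw === undefined || raw === "") {
      if (rule.required) missing.push(key);
      else if (rule.default !== undefined) result[key] = rule.default;
      continue; // nothing to parse for a missing variable
    }

    if (rule.type === "number") {
      const parsed = Number(raw);
      if (Number.isNaN(parsed)) throw new Error(`Invalid number for ${key}: ${raw}`);
      result[key] = parsed;
    } else {
      result[key] = raw;
    }
  }

  // Report every missing variable at once instead of failing on the first one.
  if (missing.length > 0) {
    throw new Error(`Missing required environment variables: ${missing.join(", ")}`);
  }
  return result;
}

const schema: Record<string, VarSchema> = {
  NODE_ENV: { required: true, type: "string" },
  PORT: { required: true, type: "number" },
  LOG_LEVEL: { required: false, type: "string", default: "info" }, // hypothetical optional variable
};

const valid = validateEnv({ NODE_ENV: "development", PORT: "3001" }, schema);
console.log(valid);
// PORT is now the number 3001 and LOG_LEVEL falls back to "info".
```

Collecting all missing names before throwing keeps the error message actionable: one failed start tells you everything that needs to be set.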

Finally, the traditional getInstance() method from the Singleton pattern, and get(key) to read one value given a key:

      public static getInstance(): Configuration {
        return (Configuration.instance ??= new Configuration());
      }

      // Getter method with type safety
      public get<K extends keyof EnvConfig>(key: K): EnvConfig[K] {
        return this.config.get(key) as EnvConfig[K];
      }

Applying config

After implementing the load, validation and typed environment variables, it’s time to use it in the existing code.

In the main entry point of restAPI app, we’ll import the config and get the port number to avoid hard-coding.

    import { Server } from "./server";
    import { Configuration } from "src/core/configuration/configuration";

    const config = Configuration.getInstance();

    const server = new Server();
    server.run({ port: config.get("PORT") });

    process.on("SIGINT", async () => {
      console.warn("Closing server");
      await server.close();
    });

Now we’ll run the server again. The port should change to 3001:

    npm run start:restAPI:dev

Now you’ve implemented your own dotenv-like library that serves your purpose and validates whether required variables are present.

Final adjustments

Assuming you use Git (I am using a GitHub repo to upload the code), you need to ignore the dependencies and the binary files generated during development by adding an official .gitignore to the project. There are other hard-coded things you can fix on your own. I upload all the code to the article’s branch of this GitHub repository, which will serve as the starting point for the coming articles.

Conclusion

In this series we explore an alternative way to use backend frameworks: changing the folder structure and conventions, and learning how something as simple as loading and validating environment variables can give you the flexibility to add interesting features without falling into a habit of installing a library for everything, which leads to long refactorings and never-ending maintenance.

In the next article we will start building a separate way to use dependency injection that does not depend on frameworks, giving you full control.

- Have you tried this approach before?

- What do you think of the separation of concerns applied here?

- Are you using the boilerplate that the frameworks’ pages provide?

Let me know in the comments.