In the previous article we laid the foundations for implementing a database engine. No matter the type of engine, the IRepository interface sets the direction for how we interact with the technology behind it. We implemented PostgreSQL, but we could have used MySQL and the differences would have been minimal. This time we will integrate the NoSQL approach to demonstrate not only that we can switch from one database engine to another, but also that good practices, separation of concerns, and software engineering fundamentals play an important role: these technical decisions can feel awful at the beginning, but they save a lot of time in the future.

Common Criticisms of NoSQL

NoSQL is the replacement for SQL

Not really. NoSQL invites us to think about projects in terms of scalability, and it works as an extension of the relational model's capabilities.

NoSQL works well with Node.js only because of the asynchronous model

This myth spread a few years ago, at the beginning of MERN (MongoDB, Express, React, Node.js) as a definitive stack, helped by how easily the technologies fit together since they all share the same language, JavaScript. Today many other languages, such as Python and Java, are used with MongoDB.

NoSQL is slow

Poor queries and bad data modeling are what cause bad performance in NoSQL. And if the modeling does not take the metrics and real needs of the business into consideration, the result will be painful.

NoSQL means No SQL anymore

The real meaning of NoSQL is "Not only SQL" or "Not only relational", which means that NoSQL fills the existing gaps in the relational model. It brings flexibility and lets us design around the metrics of the application and its business rules in order to optimize storage, retrieval, and updates.

Free Resources to Refresh Your NoSQL (MongoDB) Knowledge

At the time of writing, the company behind mongodb.com has launched its own free course catalog for learning MongoDB, called MongoDB University. I have taken a few of their courses and I don't regret it; I really recommend them if you want to learn seriously.

Does MongoDB use an ORM?

In the previous article we introduced the concept of an ORM. For MongoDB there are libraries and ORMs for Node.js, such as mongoose (strictly speaking an ODM, since it maps documents rather than relations). My opinion is the same: sometimes ORMs are overkill, particularly when the MongoDB Node.js driver has everything we need.

Installing MongoDB using Docker Compose

In this article I will use the official MongoDB image to start creating the CRUD for our Note entity; the Docker image is on the mongodb_registry. The containers will be managed with Docker Compose, which requires creating a compose.yml file. Because we will run MongoDB as a replica set with authentication enabled, the engine requires a key file that the replica set members use to authenticate to each other, so we will extend the official image using a Dockerfile. To keep everything separated, let's create a containers/mongodb folder in the root folder and put the Dockerfile for the MongoDB extension there.

The Dockerfile contains the following:

    FROM mongo:latest
    RUN openssl rand -base64 756 > /etc/mongo-keyfile
    RUN chmod 400 /etc/mongo-keyfile
    RUN chown mongodb:mongodb /etc/mongo-keyfile

These instructions run the openssl command to generate the key file that the MongoDB engine will use to start, then restrict its permissions and ownership. We can then use that Docker image in compose.yml:

    services:
      mongodb:
        build:
          context: ./containers/mongodb
          dockerfile: Dockerfile
        restart: always
        environment:
          MONGO_INITDB_ROOT_USERNAME: mongo
          MONGO_INITDB_ROOT_PASSWORD: mongo
          MONGO_INITDB_DATABASE: mongo
        ports:
          - 27017:27017
        command: --replSet rs0 --keyFile /etc/mongo-keyfile --bind_ip_all --port 27017
        healthcheck:
          test: echo "try { rs.status() } catch (err) { rs.initiate({_id:'rs0',members:[{_id:0,host:'127.0.0.1:27017'}]}) }" | mongosh --port 27017 -u mongo -p mongo --authenticationDatabase admin
          interval: 5s
          timeout: 15s
          start_period: 15s
          retries: 10
        volumes:
          - "mongo_data:/data/db"
          - "mongo_config:/data/configdb"
        networks:
          - adaptable_backend_nodejs

    volumes:
      mongo_data:
      mongo_config:

We are defining the mongodb service, which uses the latest version of MongoDB, and setting the MONGO_INITDB_ variables for the credentials. We added networks to keep the application isolated in case you have other local services running, volumes to keep the data persistent even if we restart the machine, ports to forward to the host machine (ours), and a healthcheck. The healthcheck command is quite complex, but it is the only one I found to check health on MongoDB: it calls rs.status() and, if that throws, initiates the rs0 replica set.
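The credentials, port, and replica set name chosen in compose.yml are the same values the application later needs in its DATABASE_URL. As a minimal sketch of how they fit together, the hypothetical helper below (buildMongoUri and MongoUriParts are names invented for illustration, not part of the project) assembles the connection string and percent-encodes the credentials in case they contain reserved characters.

```typescript
// Hypothetical helper, for illustration only: builds a MongoDB connection
// string from the values defined in compose.yml.
interface MongoUriParts {
  username: string;
  password: string;
  host: string;
  port: number;
  database: string;
  authSource: string; // database that holds the user's credentials
  replicaSet: string; // must match the name passed to --replSet
}

function buildMongoUri(parts: MongoUriParts): string {
  // Credentials are percent-encoded so characters like "@" or ":" stay valid.
  const user = encodeURIComponent(parts.username);
  const pass = encodeURIComponent(parts.password);
  const query =
    "authSource=" + encodeURIComponent(parts.authSource) +
    "&replicaSet=" + encodeURIComponent(parts.replicaSet);
  return `mongodb://${user}:${pass}@${parts.host}:${parts.port}/${parts.database}?${query}`;
}

// Values taken from the compose.yml above.
const devUri = buildMongoUri({
  username: "mongo",
  password: "mongo",
  host: "localhost",
  port: 27017,
  database: "mongo",
  authSource: "admin",
  replicaSet: "rs0",
});
```

With the development values, devUri is a plain mongodb:// string that can be stored in the environment file instead of being rebuilt at runtime; the helper mostly pays off when a real password contains special characters.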

Running the mongodb service

Run the command:

    npm run prepare:dev

Check in Docker Desktop that the mongodb container is running; then you are ready to start building.

Integrating MongoDB into our Node.js project

Following the guidelines of the project, the NoSQL database code will reside inside the core/database/nosql folder, so that the whole persistence layer is handled there. To begin, install the official connector library for MongoDB. I will use the official mongodb driver, which is well maintained and provides the majority of the tools required.

In the previous article we introduced the structure and contract of persistence by using interfaces and base classes; now we will create the MongoDB adaptation.

The MongoDB Repository

Implementing IRepository with the MongoDB client and following the contract guarantees interoperability within the application.

Let's add the NoSQLRepository:

    import {
      Collection,
      Filter,
      MongoClient,
      ObjectId,
      OptionalUnlessRequiredId,
      UpdateFilter,
    } from "mongodb";

    import { BaseEntity } from "@core/database/BaseEntity";
    import { CreatePayload, IRepository, UpdatePayload } from "@core/database/IRepository";
    import { config } from "src/core/configuration/configuration";

    export class NoSQLRepository<Entity extends BaseEntity> implements IRepository<Entity> {
      private readonly tableName: string;
      private client: MongoClient;
      protected collection: Collection<Entity>;

      constructor(tableName: string) {
        this.tableName = tableName;
        this.client = new MongoClient(config.get("DATABASE_URL"));
        this.collection = this.client.db().collection<Entity>(this.tableName);
        this.connect();
      }
      // shortened for brevity
    }

We import everything we need from the mongodb driver library to start integrating the CRUD, then create the NoSQLRepository to implement the IRepository contract. The constructor receives the tableName (the collection name, in the MongoDB world). The class has two attributes: client, which manages everything related to the connection, and collection, which performs the queries. I implemented the private connect() method to verify the connection.

    private async connect(): Promise<void> {
      try {
        await this.client.connect();
        this.client.on("error", (error) => {
          console.error("MongoDB connection error:", error);
        });
      } catch (error) {
        console.error("Failed to connect to MongoDB:", error);
        process.exit(1);
      }
    }

The constructor calls the private connect() method. If the connection cannot be established, the application exits with an error: the database is a very important dependency, and we can't risk starting the app if the database connection is not established.
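One detail worth keeping in mind: a constructor cannot await, so the connect() call above runs in the background while the constructor returns. A possible alternative, sketched here under my own assumptions, is to memoize the connection promise and let every repository method await it. LazyConnection and Connectable are hypothetical names; Connectable is only a structural stand-in for the driver's MongoClient so that the sketch runs without the mongodb package.

```typescript
// Minimal shape of a client with an async connect(); declared locally so the
// sketch is self-contained. This is an assumption, not the driver's type.
interface Connectable {
  connect(): Promise<unknown>;
}

class LazyConnection<Client extends Connectable> {
  private readonly client: Client;
  private ready: Promise<Client> | null = null;

  constructor(client: Client) {
    this.client = client;
  }

  // Resolves to the connected client. Concurrent callers share one promise,
  // so connect() runs once per attempt; a failed attempt can be retried.
  get(): Promise<Client> {
    if (this.ready === null) {
      this.ready = this.client.connect().then(
        () => this.client,
        (error) => {
          this.ready = null; // forget the failed attempt
          throw error;
        },
      );
    }
    return this.ready;
  }
}
```

A repository method would then start with something like const client = await this.connection.get(); before touching the collection. Whether to retry or to exit the process on failure, as the article does, remains a design decision for the application.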

Implementing the create() method

    async create(data: CreatePayload<Entity>): Promise<Entity> {
      const now = new Date();
      const documentToInsert = {
        ...data,
        createdAt: now,
        updatedAt: now,
      };

      const result = await this.collection.insertOne(
        documentToInsert as OptionalUnlessRequiredId<Entity>
      );

      return {
        id: result.insertedId.toHexString(),
        ...documentToInsert,
      } as Entity;
    }

Due to the limitations of MongoDB with autogenerated fields, we add the dates manually, cast the generic Entity payload to OptionalUnlessRequiredId, and return the inserted item.
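The manual timestamp step can be isolated into a small pure function, which also makes it easy to test with a fixed clock. This is only a sketch: withTimestamps and Timestamps are hypothetical names, not part of the project.

```typescript
// Hypothetical helper: returns a copy of the payload with both timestamps
// set to the same instant, as create() does by hand.
interface Timestamps {
  createdAt: Date;
  updatedAt: Date;
}

function withTimestamps<T extends object>(data: T, now: Date = new Date()): T & Timestamps {
  // The spread copies the payload, so the caller's object is not mutated.
  return { ...data, createdAt: now, updatedAt: now };
}

// Passing the clock explicitly keeps the result deterministic.
const stampedNote = withTimestamps({ content: "hello", timesSent: 0 }, new Date(0));
```

Inside create(), the documentToInsert object could then be built as withTimestamps(data), keeping the insert logic itself unchanged.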

Implementing the find or Read methods

We call the collection's find method to retrieve all the data available in the collection and map the result to comply with BaseEntity.

    async findAll(): Promise<Entity[]> {
      const result = await this.collection.find<Entity>({}).toArray();
      return result.map((item) => {
        const { _id, ...rest } = item as any;
        return { id: _id.toHexString(), ...rest } as Entity;
      });
    }

MongoDB provides the Filter type, which lets us add filter criteria similar to the WHERE clause in SQL, but using just an object. Also, we need to explicitly convert the id into an ObjectId, since by default MongoDB identifies documents with a 12-byte ObjectId (written as a 24-character hex string) instead of an integer sequence.
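Two small helpers can make the conversion described above safer and less repetitive. As far as I know, the driver's ObjectId constructor throws when it receives a malformed string, so checking the id first lets a repository return null instead of failing. The sketch below is an assumption-laden illustration: isObjectIdHex, toEntity, and HexId are hypothetical names, and HexId is only a structural stand-in for the driver's ObjectId so the code runs without the mongodb package. The driver also ships its own ObjectId.isValid() check, which accepts more input forms than this regex.

```typescript
// Structural stand-in for the driver's ObjectId (which also exposes
// toHexString()); declared here so the sketch is self-contained.
interface HexId {
  toHexString(): string;
}

// The hex form of an ObjectId is exactly 24 hexadecimal characters.
function isObjectIdHex(value: unknown): value is string {
  return typeof value === "string" && /^[0-9a-fA-F]{24}$/.test(value);
}

// Maps a stored document ({ _id, ...fields }) to the entity shape
// ({ id, ...fields }), the same mapping repeated in the find methods.
function toEntity<Doc extends { _id: HexId }>(doc: Doc): Omit<Doc, "_id"> & { id: string } {
  const { _id, ...rest } = doc;
  return { id: _id.toHexString(), ...rest };
}
```

In a repository method, a guard such as if (!isObjectIdHex(id)) return null; before building the filter would turn a malformed id into a normal "not found" result.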

    async findById(id: string | number): Promise<Entity | null> {
      const query: Filter<Entity> = { _id: new ObjectId(id) } as Filter<Entity>;
      const result = await this.collection.findOne(query);

      if (!result) {
        return null;
      }
      const { _id, ...rest } = result as any;
      return { id: _id.toHexString(), ...rest } as Entity;
    }

Implementing the Update

We use the Filter type to prepare the criteria and UpdateFilter to build the update document, then call the findOneAndUpdate() method that MongoDB provides, which finds the document and updates it in a single atomic operation. When no document matches, we return null.

    async update(id: string | number, data: UpdatePayload<Entity>): Promise<Entity | null> {
      const query: Filter<Entity> = { _id: new ObjectId(id) } as Filter<Entity>;
      const updateDocument: UpdateFilter<Entity> = {
        $set: {
          ...data,
          updatedAt: new Date(),
        } as Partial<Entity>,
      };

      const result = await this.collection.findOneAndUpdate(
        query,
        updateDocument,
        { returnDocument: 'after' }
      );

      if (!result) {
        return null;
      }

      const { _id, ...rest } = result as any;
      return { id: _id.toHexString(), ...rest } as Entity;
    }

Implementing the delete

The deletion is self-explanatory and follows the same pattern as the previous implementations:

    async delete(id: string | number): Promise<boolean> {
      const query: Filter<Entity> = { _id: new ObjectId(id) } as Filter<Entity>;
      const result = await this.collection.deleteOne(query);
      return result.deletedCount > 0;
    }

Adding the MongoDB environment variables

As we did in the compose.yml file, we need to update our .env to load that configuration: comment out the SQL engine and add the NoSQL variables:

    # Database SQL
    #DATABASE_URL=postgresql://postgres:postgres@localhost:5432/postgres
    #DATABASE_ENGINE=postgres

    # Database NoSQL
    DATABASE_URL=mongodb://mongo:mongo@localhost:27017/mongo?authSource=admin&replicaSet=rs0
    DATABASE_ENGINE=mongodb

Create the NoSQL setup

MongoDB and the NoSQL movement simplify schema building and provide flexibility, which gives us some agility when we start building. In our case we don't need to create a schema migration, since we will use the agnostic entities in the features folder.

Creating the seeders

As we did for [SQL](previous article), we will replicate the same idea.

const now = new Date();

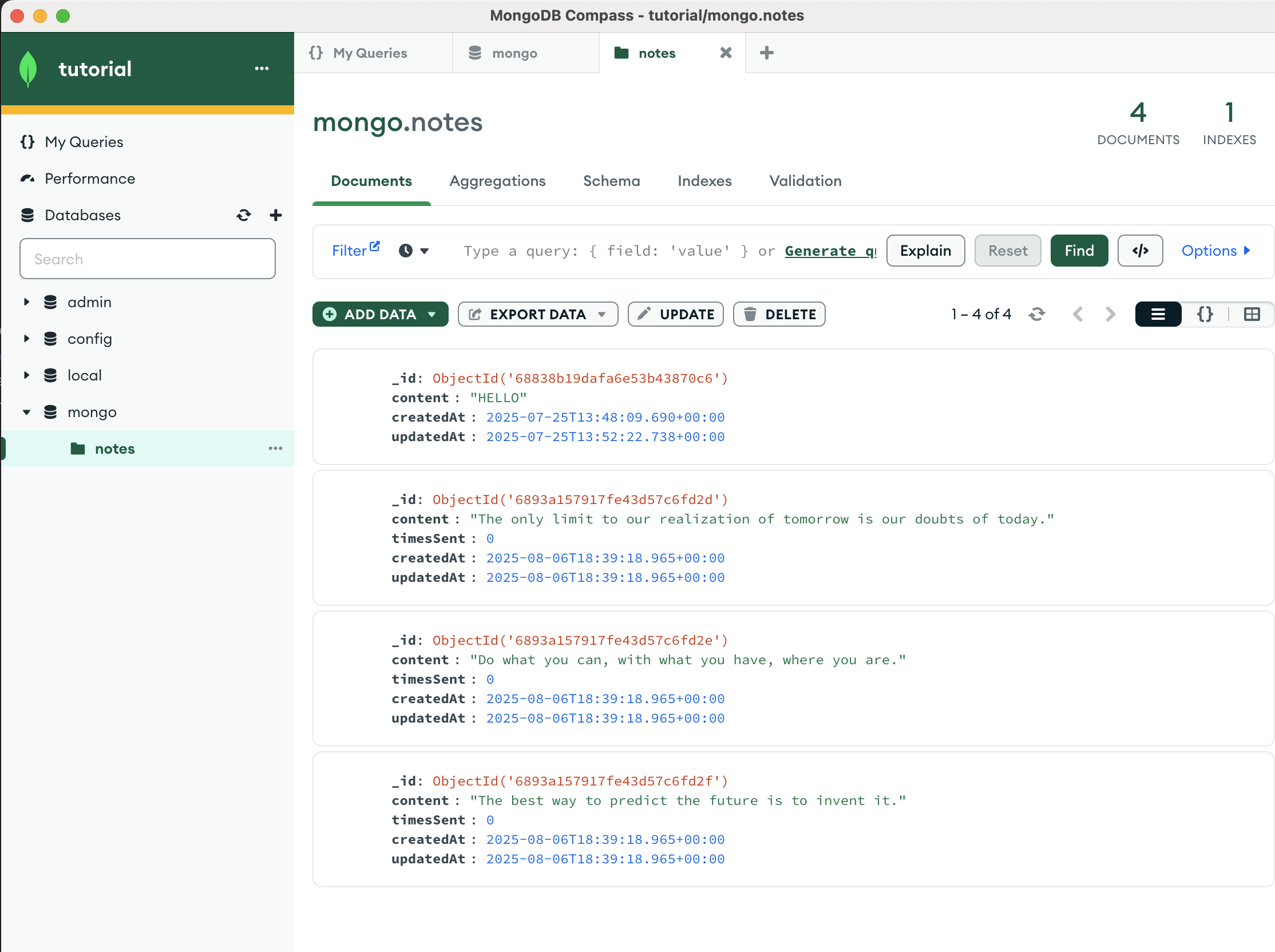

export const notes = { collection: "notes", data: [ { content: "The only limit to our realization of tomorrow is our doubts of today.", timesSent: 0, createdAt: now, updatedAt: now, }, { content: "Do what you can, with what you have, where you are.", timesSent: 0, createdAt: now, updatedAt: now, }, { content: "The best way to predict the future is to invent it.", timesSent: 0, createdAt: now, updatedAt: now, } ],};We will create the script that will run all seeders:

    import { MongoClient } from "mongodb";

    import { notes } from "@core/database/nosql/seeders/notes";
    import { config } from "src/core/configuration/configuration";

    export class Seeder {
      private static readonly seeders = [
        notes,
      ];

      static async run() {
        const dbConnection = new MongoClient(config.get("DATABASE_URL"));
        console.log("Running seeders... ");

        try {
          await dbConnection.connect();

          for (const seeder of Seeder.seeders) {
            const collection = dbConnection.db().collection(seeder.collection);
            console.log(`Running seeder: ${seeder.collection}`);
            await collection.insertMany(seeder.data);
          }
        } catch (error) {
          console.error("error seeding: ", error);
        } finally {
          await dbConnection.close();
        }
      }
    }

    Seeder.run();

Then let's update the scripts in package.json to simplify the seeding process. Note that seederNoSQL must point to the seeder inside the nosql folder:

    "scripts": {
      ...
      "seeder": "NODE_OPTIONS=--no-warnings npx ts-node -r tsconfig-paths/register src/core/database/sql/seeder.ts",
      "seederNoSQL": "NODE_OPTIONS=--no-warnings npx ts-node -r tsconfig-paths/register src/core/database/nosql/seeder.ts"
    },

Make sure the Docker services are running by executing npm run prepare:dev first.

After running npm run seederNoSQL, the note items are stored in the database. Using the MongoDB Compass client, you can see the data that was created.

Updating the NoteRepository

After creating all the infrastructure for NoSQL, we need to update our previous strategy in the constructor to choose a database engine depending on the environment variables.

    export class NoteRepository implements IRepository<Note> {
      private DB_COLLECTION_NAME = "notes";
      private repository: IRepository<Note>;

      constructor() {
        const dbEngine = config.get("DATABASE_ENGINE");

        if (dbEngine === DatabaseEngine.SQL) {
          this.repository = new SQLRepository<Note>(this.DB_COLLECTION_NAME);
        } else if (dbEngine === DatabaseEngine.NOSQL) {
          this.repository = new NoSQLRepository<Note>(this.DB_COLLECTION_NAME);
        } else {
          // Fail fast so the repository is never left unassigned.
          throw new Error(`Unsupported DATABASE_ENGINE: ${dbEngine}`);
        }
      }
      // shortened for brevity...
    }

Updating the NoteController

Not really. Thanks to the separation of concerns and modularity that we applied, there is no need to update anything; the same applies to NoteRestController.
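To see why the controller is untouched, here is a toy sketch of the idea with no database at all. Everything in it is a simplified assumption made for illustration: NoteStore stands in for the article's IRepository (with only two methods), the two in-memory classes stand in for SQLRepository and NoSQLRepository, and listContents plays the role of the controller.

```typescript
interface SimpleNote {
  id: string;
  content: string;
}

// Simplified stand-in for the repository contract.
interface NoteStore {
  create(content: string): Promise<SimpleNote>;
  findAll(): Promise<SimpleNote[]>;
}

// Toy store with sequence-like ids, standing in for the SQL engine.
class SequentialIdStore implements NoteStore {
  private notes: SimpleNote[] = [];
  async create(content: string): Promise<SimpleNote> {
    const note = { id: String(this.notes.length + 1), content };
    this.notes.push(note);
    return note;
  }
  async findAll(): Promise<SimpleNote[]> {
    return [...this.notes];
  }
}

// Toy store with opaque string ids, standing in for the NoSQL engine.
class OpaqueIdStore implements NoteStore {
  private notes = new Map<string, SimpleNote>();
  async create(content: string): Promise<SimpleNote> {
    const note = { id: "doc-" + (this.notes.size + 1), content };
    this.notes.set(note.id, note);
    return note;
  }
  async findAll(): Promise<SimpleNote[]> {
    return Array.from(this.notes.values());
  }
}

type Engine = "sql" | "nosql";

// The only place that knows which engine is in use.
const storeFactories: Record<Engine, () => NoteStore> = {
  sql: () => new SequentialIdStore(),
  nosql: () => new OpaqueIdStore(),
};

// The "controller" depends on the contract only, never on an engine.
async function listContents(store: NoteStore): Promise<string[]> {
  const notes = await store.findAll();
  return notes.map((note) => note.content);
}
```

Calling listContents(storeFactories["sql"]()) or listContents(storeFactories["nosql"]()) goes through exactly the same code path in the caller; only the factory entry changes, which mirrors what the NoteRepository constructor does with DATABASE_ENGINE.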

Conclusion

In this example we switched between the SQL and NoSQL approaches just by using a few design patterns and good practices. The real need to switch from one technology to another lies in the correct interpretation of the needs of the business. Some organizations tend to use both database engines; others require a huge refactoring effort to integrate a new database engine because they did not apply good practices up front. In the inference and AI world, more than one type of database can coexist in the same backend application. We need to dive deep into the needs of the project and make strategic decisions that keep the backend easy to change in the long term and adaptable to its persistence needs. I recommend reading this wonderful hellointerview.com - sql vs nosql article, which explains the key differences between them. As usual, all the code will be uploaded to the GitHub repo so you can replicate it and follow along.

Have you ever faced similar issues when you had to implement a database engine in your back-end applications? Please let me know in the comments.